ENTRY 11/19/22

Privacy as a human right in the face of growing technological dependence

Read time: 18 minutes

Introduction

I was born into an age in which I do not own my soul, cursed by a nonconsensual Faustian pact that preceded my first breath. On October 23rd, 2000, Google announced AdWords™ (Google), marking the genesis of a new type of digital industry built on exploiting the habits and psychology of unsuspecting people. A year later, following the 9/11 attacks, President Bush signed the Patriot Act into law on October 26th, 2001 (U.S. DoJ). These two events radically reshaped the world I would grow up in before I even had a name.

Surveillance has only become more prevalent as I have grown up, and it has shaped a generation of my peers. The technology used to monitor, record and predict the behavior of billions of people has grown ever more powerful and omnipresent. Your cell phone, your car's GPS, retail CCTV cameras, RFID chips and many other widespread technologies all serve as instruments to invade, tabulate and commercialize your life. As this threat grows, understanding the ethical implications of surveillance and our right to privacy is vital to raising awareness and driving change.

Sonder is a neologism coined by John Koenig on his website The Dictionary of Obscure Sorrows, defined as:

The realization that each random passerby is living a life as vivid and complex as your own—populated with their own ambitions, friends, routines, worries and inherited craziness (Koenig).

As my life has gone on, I feel that sonder has faded as the world walks face down toward its glowing mirrors. It is rare to look somebody in the eye and see the complexities that built them into the individual standing in front of me. Instead, we envelop ourselves in the stereotypes and effigies our devices show us, killing the individual behind the screen for a mere representation. Social media titans have reduced us to data points to maximize profits, but they have also reduced the individuals within the content they propagate. As we scroll through thousands of images of friends and family, we see snapshots that fit a false archetype: an idealized album of their lives that strips away their humanity and leaves only an impression. Mass surveillance goes beyond simple paranoia and principle; it is the death of sonder, the commodification of society.

This essay analyzes the birth, development, growth and moral implications of mass surveillance in both the commercial and governmental sectors. Defining Privacy addresses the multiple aspects of privacy and their psychological and moral importance, providing a pragmatic basis for concern about its infringement. Symbiosis de Rigueur explores society's ever-growing dependence on technology for socio-economic survival in the modern age to emphasize the inescapability of mass surveillance. Building The Panopticon investigates the origin and growth of surveillance behemoths. Invisible Chains examines the societal implications of using mass surveillance for direct psychological targeting and manipulation. Faux Emancipation addresses how privacy can be protected in the modern world.

Defining Privacy

Understanding the ethical implications of surveillance requires a proper understanding of its antithesis, the right to privacy. The IAPP elegantly addresses two forms of privacy with the following definition:

Symbiosis de Rigueur

These words will most likely never exist on paper. Every aspect of our lives has been digitized; the Information Age sparked a rebirth in how society operates on a fundamental level. However, this renewal is mandatory: technological literacy and connection are essential for socio-economic survival and success in the modern age. The internet is now embedded in the core of our participation in society; the educational, occupational, social, political and other aspects of life all depend on compliance with internet titans. Short of life in the woods, there is no escape from the grasp of the machine. At the heart of this assimilation are billion-dollar corporate giants who exploit this dependence to push the world into surrendering its privacy. Understanding the world's reliance on technology provides insight into the stratagems of these organizations. This section addresses two areas of society that corporations envelop and commandeer to grow their empires.

Life

As of October 2022, nearly 76% of all desktop computers run Microsoft Windows (StatCounter), and Microsoft reports 1.4 billion active devices running Windows 10 or 11 (Microsoft). The ubiquity of Microsoft's operating system has held steady despite outcry over Microsoft's privacy policy and data collection. A 2015 Newsweek article by Lauren Walker analyzes the privacy policy of Windows 10 at its release, highlighting that “your typed and handwritten words are collected” (qtd. in Walker) and noting the company's mention of usage data being used for targeted advertising. Microsoft gets away with these privacy invasions because of its sheer market dominance. Many people are familiar with Windows and unaware that alternative operating systems exist. To monopolize the operating system market is to take ownership of people's digital lives.

Everything is connected. Internet of Things (IoT) devices such as “smart” security cameras, fridges, speakers and a myriad of other appliances have continued to grow more popular in the United States. More than 70% of American homes have an IoT device (Kumar). Coinciding with this preference for internet-based utility devices is the total concession of genuine control over these appliances. For example, during a heat wave, Xcel Energy remotely adjusted and locked thousands of smart thermostats to relieve strain on the power grid (Campbell-Hicks). Regardless of the ethics of this action, it reveals an important condition of these devices: you are not in control.

Education

On my first day of junior high, the school district gave me a Google Chromebook. From that point forward, most of my schoolwork and assignments were digital, and my teachers and I relied entirely on the Google ecosystem. Even now, knowing how far Google intrudes into my life, I still use its products because they have been ingrained in my life since I was young. Their products are familiar to me, so, like many others, I let them get away with eavesdropping. Google has cemented itself in K-12 education with its Chromebook products. According to the New York Times in 2017, more than 30 million children use Google's services for education (Singer). While this outreach is a wonderful stride for educational opportunity, it comes at a cost: brand loyalty. The same article quotes a high school notice urging students to convert their school Google accounts to personal accounts (Singer), a sign of how effectively classroom use of Google's products builds brand loyalty. If students continue to rely on Google's products into adulthood, those millions of children become millions of new people to observe and advertise to.

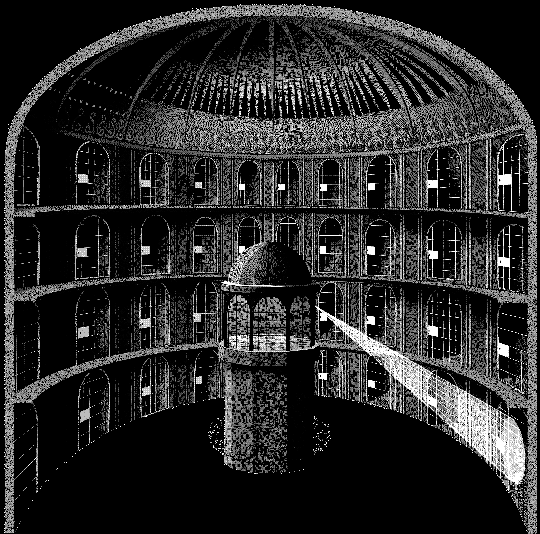

Building The Panopticon

The panopticon is a prison design by Jeremy Bentham in which a central guard tower can see into every cell, but the inmates cannot see into the tower, so they never know whether they are being watched. Today, the panopticon serves as a powerful metaphor for digital surveillance: government agencies and corporations alike look into every aspect of our lives, but we are unable to look back (Ethics Centre). A panopticon is not built overnight, and neither is a data empire. The surveillance-enveloped world developed through a slow, deliberate descent driven by continued willful ignorance, extenuation and bewilderment.

In 2009, Google launched the beta of its “interest-based” advertising, marking the start of a new paradigm in advertising technology. Before this release, Google's ads were based simply on relevance to the current search terms; for example, “if you search for [digital camera] on Google, you'll get ads related to digital cameras”. With “interest-based” advertising, Google created a persistent record of users' interests based on their search and webpage engagement history (Wojcicki). While far from the complex algorithmic advertising systems now associated with Google, this development laid the groundwork for the surveillance and bookkeeping side of Google's business model. From 2009 onward, users' activity has been followed and catalogued. Today, Google has trackers embedded in 85.6% of the top 50,000 websites (DuckDuckGo). The walls of Google's panopticon are not bound by its own services; Google has unblinking eyes scattered across the entire internet.
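To make the difference between the two approaches concrete, here is a minimal Python sketch. It is not Google's system, whose internals are not public: the category names, the keyword table and the `InterestProfile` class are hypothetical, and simple keyword matching stands in for whatever classification a real ad platform uses. The point is only the structural difference: a contextual ad looks at the current query and forgets it, while an interest-based profile keeps a running record of everything it has seen.

```python
from collections import Counter

# Hypothetical ad categories and trigger words, for illustration only.
CATEGORY_KEYWORDS = {
    "cameras": {"camera", "lens", "tripod"},
    "cars": {"car", "engine", "tires"},
    "travel": {"flight", "hotel", "beach"},
}


def categorize(text: str) -> set[str]:
    """Return every category whose trigger words appear in the text."""
    words = set(text.lower().split())
    return {cat for cat, keys in CATEGORY_KEYWORDS.items() if words & keys}


def contextual_ads(query: str) -> set[str]:
    """Contextual style: ads depend only on the query typed right now."""
    return categorize(query)


class InterestProfile:
    """Interest-based style: a persistent record that outlives each query."""

    def __init__(self) -> None:
        self.history = Counter()  # category -> number of times observed

    def observe(self, activity: str) -> None:
        """Add every search or page visit to the long-term record."""
        self.history.update(categorize(activity))

    def targeted_ads(self, top_n: int = 2) -> list[str]:
        """Choose ads from accumulated history, not from the current query."""
        return [cat for cat, _count in self.history.most_common(top_n)]


if __name__ == "__main__":
    profile = InterestProfile()
    for event in ["best camera lens", "cheap flight to rome", "camera tripod review"]:
        profile.observe(event)
    print(contextual_ads("weather today"))
    print(profile.targeted_ads(1))
```

In this sketch an unrelated query such as "weather today" produces no contextual match at all, yet the profile still ranks "cameras" first because two earlier searches mentioned cameras. The record, not the query, decides what is shown.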

If the digital panopticon had a guard tower, it would be the Utah Data Center, a one-million-square-foot facility owned and operated by the NSA that became a symbol of government surveillance after the leaks by Edward Snowden. Some estimates claim that the Utah Data Center could hold zettabytes of information, where one zettabyte is one billion terabytes (Gorman "Meltdowns Hobble"). All this storage has one purpose: recording the phone calls and online activity of American citizens. The development of the NSA's mass surveillance program, as characterized by the Utah Data Center, can largely be attributed to the acceptance of surveillance after 9/11 under the Bush administration. According to the New York Times, “Months after the Sept. 11 attacks, President Bush secretly authorized the National Security Agency to eavesdrop on Americans and others inside the United States to search for evidence of terrorist activity without the court-approved warrants” (Risen). These actions in the immediate aftermath of 9/11 were a precursor to the massive government surveillance program unmasked by the Snowden leaks in 2013. Snowden's leaks unveiled a complex network of government surveillance programs, tools and corporate cooperation. An extensive list compiled by Business Insider summarizes key information from Snowden's “7,000” leaked documents. Notable items include the NSA's backdoor into Google and Facebook; mass monitoring of American and German citizens' emails and phone calls; and NSA-developed malware to infect computers and steal files (Szoldra). These actions are only a small sample of the NSA's many encroachments on domestic and international privacy.

However, the most ethically jarring aspect of these programs is agents' personal and political use of surveillance tools. One disturbing example is “LOVEINT,” in which agents used surveillance tools to stalk love interests and partners (Gorman "NSA Officers"). Another is the spying on negotiators during the 2009 climate summit (Sheppard). These applications strip away the “antiterrorism” veneer of government surveillance and reveal the malicious nature of such programs. Regardless of intent, any tool this powerful will be abused; there is no way to trust that every agent and every official has pure intentions. Surveillance is omnipresent, and even apart from monetary motives, the potential for abuse is always there.

Invisible Chains

When portraying surveillance, it is important to emphasize that the party on the other end of the camera is not an indifferent statistician. The goal of surveillance is not simple analytics; it is a tool for manipulation. The ethical significance of digital surveillance extends beyond the right to oneself to the right to self-determination: the right to be oneself.

Social media websites are an extremely powerful example of this tenet of surveillance. Modern social media platforms have three main goals: captivate, extract and manipulate. These three pillars form an interdependent, self-sustaining system that keeps users addicted to a platform in order to extract more and more information about their psychology. That information is then used to optimize content that keeps the user ensnared. Social media platforms have created invisible chains that constrain and puppeteer the lives of billions.

Addiction

A 2018 report by GlobalWebIndex found that people spend an average of 2 hours 22 minutes per day on social media platforms ("Gwi Audience Insight"). These platforms captured this massive share of time through a stream of practices all aimed at one goal: addiction. The addictive nature of social media has become fairly common knowledge, but the specific ways these platforms keep people psychologically hooked remain a dense and closely guarded topic. Still, understanding some of the general principles and techniques they use reveals how people's psychology is continually exploited by these corporations to maximize profits. After all, keeping people on the app longer means a richer stream of psychological data points and a longer window to serve advertisements. Modesty is not a trait of capitalism; every source of profit must be intensified, including the exploitation of the mind.

One prominent siren-like technique of social media platforms is the “infinite scroll,” in which new content is continually fetched as the user scrolls, so the feed has no apparent end. Infinite scroll serves two functions in user engagement: immersion and conditioning. With no break in the stream of content, the user is completely immersed in the platform and has no natural moment for contemplation, creating a habitual sphere of mindless scrolling. When the user finally lands on a piece of content they enjoy, it acts as a reward for their scrolling. Because these rewards arrive unpredictably, they act as intermittent reinforcement that sustains the user's attention, much like a slot machine (Montag).
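The slot-machine comparison rests on what psychologists call a variable-ratio reward schedule. The toy Python simulation below illustrates that idea under loudly simplified assumptions; it is not a model from Montag or from any platform. Every post in an endless feed is treated as independently "rewarding" with a fixed, hypothetical probability `reward_prob`, and the function records how many posts the user scrolls between rewards. Real feeds rank content rather than rolling dice.

```python
import random


def scroll_session(num_posts: int, reward_prob: float, seed: int = 0) -> list[int]:
    """Toy model of an infinite feed on a variable-ratio reward schedule.

    Each post is independently "rewarding" with probability reward_prob
    (a hypothetical simplification). Returns the number of posts scrolled
    between consecutive rewards; posts after the final reward are not counted.
    """
    rng = random.Random(seed)  # seeded so the session is reproducible
    gaps: list[int] = []
    since_last_reward = 0
    # A real infinite scroll never ends; num_posts just stops the simulation.
    for _ in range(num_posts):
        since_last_reward += 1
        if rng.random() < reward_prob:
            gaps.append(since_last_reward)
            since_last_reward = 0
    return gaps


if __name__ == "__main__":
    gaps = scroll_session(num_posts=1000, reward_prob=0.15, seed=42)
    print("rewards:", len(gaps))
    print("average posts between rewards:", sum(gaps) / len(gaps))
    print("shortest and longest gap:", min(gaps), max(gaps))
```

With 1,000 posts and a 15% reward rate, the gaps average roughly 1 / 0.15, or about seven posts, but individual gaps swing unpredictably from a single post to many. That unpredictability, rather than the average, is the property the slot-machine comparison points to.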

Social media naturally exploits one of humanity's defining characteristics: we are social animals. With the psychological need to socialize comes the anxiety commonly known as the “fear of missing out,” or FOMO. Since social media provides a window into the lives of your peers, it is unsurprising that FOMO and social media use are strongly correlated, and that this correlation can be exploited for user engagement. A 2016 research article by Jessica Abel finds a correlation between higher self-reported levels of FOMO and social media usage. The article goes on to suggest that a measurement of FOMO could be used as a tool to “utilize these feelings to drive purchase intentions and better understand consumers’ motivations. With a deeper understanding of the relationship between social media habits and a consumer’s degree of FOMO, marketers may have the ability to incorporate consumers’ desire to belong as a motivational tool to purchase a product or seek out additional information” (Abel).

These are just two of the many ways social media companies exploit the human mind to drive up engagement. While these techniques, among many others, are unsettling from a humanitarian and ethical standpoint, they are just the locks on the chains. The moral implications of this technology grow more perturbing when we consider what these corporations do with billions of cyberjunkies.

Extraction

In her book The Age of Surveillance Capitalism, Shoshana Zuboff outlines the concept of “behavioral surplus.” In short, behavioral surplus is the behavioral data that internet services collect clandestinely from users and analyze for profit. As companies such as Google adopted advertising-based business models, maximizing the accumulation of behavioral surplus became essential to their operations and future. Behavioral surplus is so valuable because it is used to formulate predictive patterns that enable precise targeting of advertisements (Zuboff).

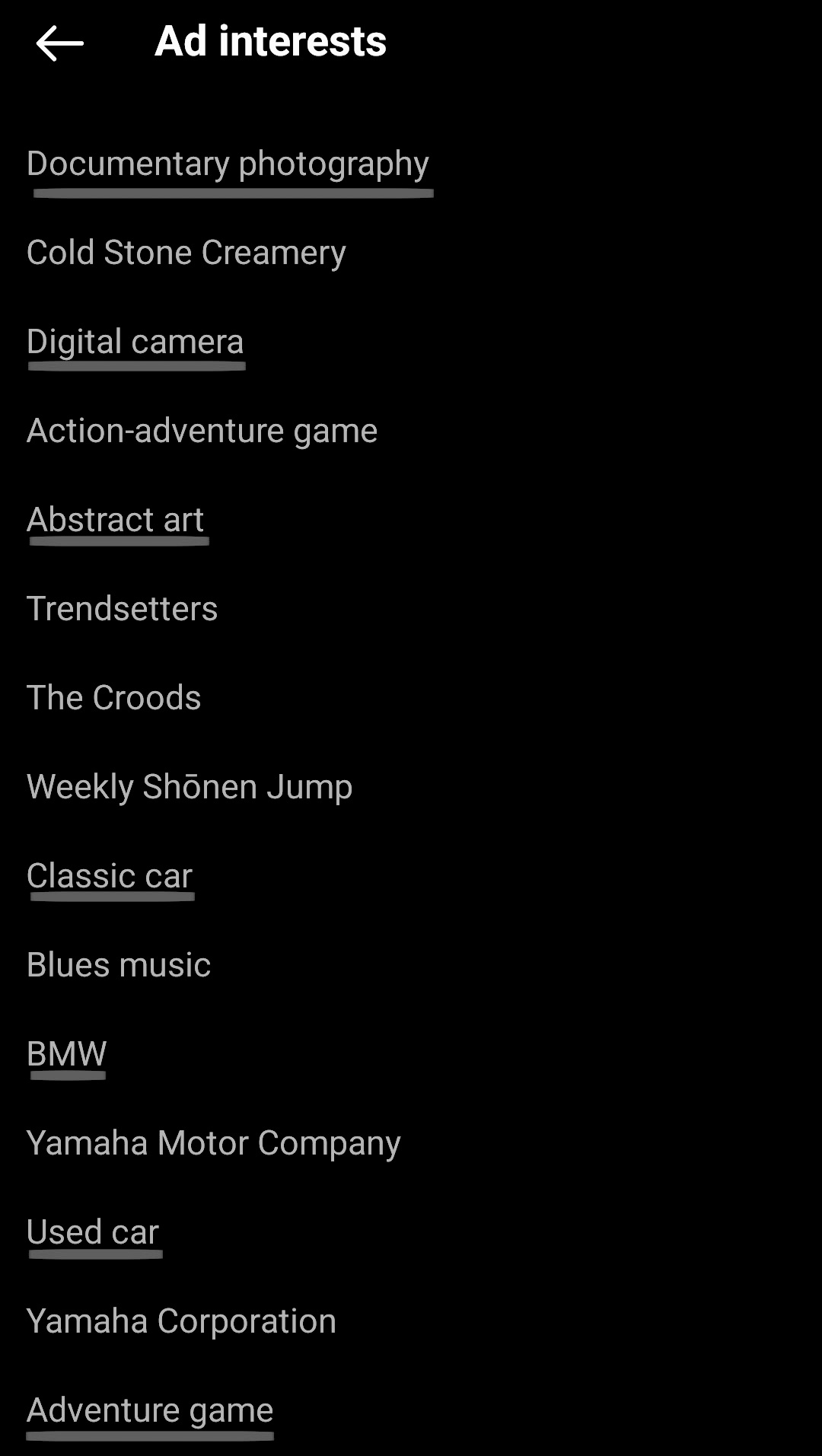

Navigating into my personal Instagram account, I can retrieve a massive list of keywords linked to my account that Instagram uses to match advertisements to me. The underlined tags accurately reflect my interests. This is a mere excerpt of the list of items Instagram associates with my activity, and I never explicitly told them that I like cars or abstract art. More frightening still, this is only the data Instagram is willing to admit it possesses; I have no idea how its algorithm uses this list or the hundreds of other data points maintained about my life.

Instagram's terms of use explicitly state, “You give us permission to show your username, profile picture, and information about your actions (such as likes) or relationships (such as follows) next to or in connection with accounts, ads, offers, and other sponsored content that you follow or engage with that are displayed on Meta Products, without any compensation to you. For example, we may show that you liked a sponsored post created by a brand that has paid us to display its ads on Instagram.” (Instagram). This statement is extremely broad and offers little insight into how Instagram analyzes the psychology of its users. Even with very little information, these algorithms can build a distressingly accurate model of a person. A 2015 research article by Wu Youyou, boldly titled “Computer-based personality judgments are more accurate than those made by humans,” demonstrated that a computer algorithm using only Facebook “likes” predicted an individual's personality more accurately than human judges did. Most alarming was the finding that “computer personality judgments have higher external validity when predicting life outcomes such as substance use, political attitudes, and physical health; for some outcomes, they even outperform the self-rated personality scores” (Youyou). These predictions used only the “like” data of 86 thousand volunteers, whereas Facebook has billions of active users who provide far more information than likes. The entirety of these companies' business models relies on building the best possible models of their users. If this degree of accuracy can be achieved from an individual's “liked” content alone, the deployed algorithms, drawing on far richer data, may well be more accurate still.

Manipulation

Advertising has always been a form of mind control. However, never before in the history of advertising have consumers' proclivities and personalities been digitized on an individual basis. According to McKinsey & Company, “76% of consumers are more likely to consider purchasing from brands that personalize” ("The Value Of"). From a purely financial standpoint, the power of surveillance-based marketing is clear. However, the strength of individual psychological analysis extends beyond simple marketing. Individual tailoring is personal propaganda, the puppeteer behind the invisible chains, plucking the subconscious for every profitable desire. The horror of behavioral surveillance is that it is not just a mirror; it is a remote. Users' souls are at the mercy of shareholders, and profiteering sees independence as a liability.

Manipulation goes beyond advertising; the tools of psychological profiling have become a key asset in influencing political outcomes. At the heart of recent political campaign controversy is Cambridge Analytica, a self-described analytics firm accused of influencing the 2016 U.S. presidential election and the Brexit campaign. Cambridge Analytica's influence came from its use of psychological profiling to create tailored political messaging that appealed to an individual's biases and beliefs. Its technology also allowed “real-time” modification of the tailored content based on the individual's reaction, to improve impact (Risso). The power of Cambridge Analytica's algorithms grew from the data of millions of Facebook users, obtained underhandedly: using a “personality app under the guise of academic research,” Cambridge Analytica harvested the data of 50 million Facebook users (Lewis). Psychological tailoring is responsible for much more than selling products; it has become a tool for exploiting democracy by pulling the strings of its etymological and political root, the people.

A commonly discussed unintended consequence of social media's societal reach, combined with its core methodology of influence, is the exacerbation of political polarization and extremism. This polarization is largely attributed to social media fostering “echo chambers”: environments in which people interact only with like-minded individuals and form a shared narrative. Social media contributes to echo chambers through its algorithms' inherent tendency to promote content that matches an individual's preferences (Cinelli). This algorithmic promotion forms a confirmation-bias feedback loop that eventually surrounds the user solely with information reinforcing their worldview, regardless of credibility. Without opposing information, people come to believe their views are concrete and commonsensical, which damages the chance for debate and consensus and fuels ideological polarization.
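The feedback loop described above can be sketched as a toy model in Python. This is an illustrative assumption, not the method of Cinelli et al. or of any real recommender: the feed is reduced to a single number, the share of posts that agree with the user, and the user is assumed to engage slightly more with agreeing posts (`agree_rate`) than with opposing ones (`disagree_rate`); both rates are hypothetical. Each round, the recommender sets the next feed's agreeing share equal to the share of engagement that went to agreeing posts.

```python
def echo_chamber(initial_share: float, rounds: int,
                 agree_rate: float = 0.6, disagree_rate: float = 0.4) -> list[float]:
    """Toy recommender/user feedback loop (hypothetical parameters).

    initial_share: fraction of the feed that agrees with the user's view.
    agree_rate / disagree_rate: chance the user engages with an agreeing /
    opposing post. Returns the agreeing share of the feed after each round.
    """
    if not (0 < agree_rate <= 1 and 0 < disagree_rate <= 1):
        raise ValueError("engagement rates must be in (0, 1]")
    if not 0 <= initial_share <= 1:
        raise ValueError("initial_share must be in [0, 1]")
    share = initial_share
    history = [share]
    for _ in range(rounds):
        # Expected engagement with agreeing vs. opposing posts in this feed.
        agree = share * agree_rate
        disagree = (1 - share) * disagree_rate
        # The recommender promotes whatever earned engagement: the next feed's
        # agreeing share equals the agreeing share of this round's engagement.
        share = agree / (agree + disagree)
        history.append(share)
    return history


if __name__ == "__main__":
    for round_number, share in enumerate(echo_chamber(0.5, 10)):
        print(f"round {round_number}: {share:.1%} of the feed agrees with the user")
```

Under these assumptions, even a perfectly balanced starting feed drifts toward agreement: with a 60/40 engagement bias, a 50% share becomes 60% after one round and passes 95% within ten rounds. When the two engagement rates are equal, the share never moves, which isolates the user's engagement bias, amplified by the recommender, as the engine of the loop.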

Faux Emancipation

A common call to action in arguments against corporate and government surveillance is a demand for regulation and political action. This is not enough. Such primitive proposals are a farcical deus ex machina that veils a retreat into corporate submission. According to the Washington Post, “Amazon, Facebook, Google, and four other top technology giants spent more than $65 million to lobby the U.S. government last year, shelling out record-breaking amounts in some cases to try to battle back antitrust scrutiny and new regulatory threats” (Romm). Lobbying of this caliber is a testament to these corporations' political dominance. Bluntly, money speaks louder than entreaty. To believe in change through petition alone is to believe in faux emancipation.

Our lives have become part of a memetic vivarium for large corporations that control the pipelines of our social connections. There is no better way to control what someone thinks than to make their peers believe the same thing. Nobody is immune to this propagandistic leviathan, and officials cannot be trusted to defend privacy when they too are under the influence of such technology. The only solace is personal protection.

The beauty of the internet is its ability to spread information, including articles and resources created by privacy-conscious individuals. Lingering in the shadow of the internet giants are software alternatives that offer privacy-respecting versions of everyday digital tools. For example, Startpage is a search engine that anonymizes and “forgets” search queries. Additionally, sites such as Spyware Watchdog and ToS;DR analyze the privacy policies and network activity of various programs and services to give a simplified assessment of the risk posed by mainstream software. The internet may be ingrained in our lives, but surveillance software doesn't have to be.

Past revolutions began with a single voice that became many. A revolution against the digital puppeteer, however, will begin with the silence of a single user amid the cacophony. True emancipation comes from the silence of the mind, breaking the invisible chains at every junction. Internet liberty will come from blinding the oppressors by spreading awareness and becoming more privacy-conscious.

Endnotes

Works Cited

Campbell-Hicks, Jennifer. “Xcel Took over Thermostats of Thousands of Customers for the First Time.” KUSA.com, 9 News, 1 Sept. 2022, www.9news.com/article/tech/xcel-took-over-smart-thermostats/73-848b81bc-6194-4def-9015-97592e9043af.

Cinelli, Matteo, et al. “The Echo Chamber Effect on Social Media.” Proceedings of the National Academy of Sciences, vol. 118, no. 9, 2021, doi:10.1073/pnas.2023301118.

“Ethics Explainer: The Panopticon - What Is the Panopticon Effect?” The Ethics Centre, 14 Dec. 2021, https://ethics.org.au/ethics-explainer-panopticon-what-is-the-panopticon-effect/.

“Desktop Operating System Market Share Worldwide.” StatCounter Global Stats, StatCounter, https://gs.statcounter.com/os-market-share/desktop/worldwide#monthly-202111-202210.

“DuckDuckGo Tracker Radar Exposes Hidden Tracking.” Spread Privacy, Spread Privacy, 15 July 2022, https://spreadprivacy.com/duckduckgo-tracker-radar/.

“Google Launches Self-Service Advertising Program.” News from Google, Google, 23 Oct. 2000, https://googlepress.blogspot.com/2000/10/google-launches-self-service.html.

Gorman, Siobhan. “Meltdowns Hobble NSA Data Center.” The Wall Street Journal, Dow Jones & Company, 8 Oct. 2013, www.wsj.com/articles/SB10001424052702304441404579119490744478398.

Gorman, Siobhan. “NSA Officers Spy on Love Interests.” The Wall Street Journal, Dow Jones & Company, 24 Aug. 2013, www.wsj.com/articles/BL-WB-40005.

“Gwi - Audience Insight Tools, Digital Analytics & Consumer Trends.” Globalwebindex, 2018, www.gwi.com/hubfs/Downloads/Social-H2-2018-report.pdf.

Klitou, Demetrius. “Privacy-Invading Technologies and Privacy by Design.” Information Technology and Law Series, 2014, doi:10.1007/978-94-6265-026-8.

Koenig, John. “Sonder.” The Dictionary of Obscure Sorrows, 22 July 2012, www.dictionaryofobscuresorrows.com/post/23536922667/sonder.

Kumar, Deepak, et al. “All Things Considered: An Analysis of IoT Devices on Home Networks.” USENIX, 14 Aug. 2019, www.usenix.org/conference/usenixsecurity19/presentation/kumar-deepak.

Lewis, Paul, and Julia Carrie Wong. “Facebook Employs Psychologist Whose Firm Sold Data to Cambridge Analytica.” The Guardian, Guardian News and Media, 18 Mar. 2018, www.theguardian.com/news/2018/mar/18/facebook-cambridge-analytica-joseph-chancellor-gsr.

“Microsoft by the Numbers.” Stories, Microsoft, https://news.microsoft.com/bythenumbers/en/windowsdevices.

Montag, Christian, et al. “Addictive Features of Social Media/Messenger Platforms and Freemium Games against the Background of Psychological and Economic Theories.” International Journal of Environmental Research and Public Health, vol. 16, no. 14, 2019, p. 2612, doi:10.3390/ijerph16142612.

“Preserving Life and Liberty.” Life and Liberty Archive, U.S. Department of Justice, www.justice.gov/archive/ll/archive.htm.

Risen, James, and Eric Lichtblau. “Bush Lets U.S. Spy on Callers without Courts.” The New York Times, The New York Times, 16 Dec. 2005, www.nytimes.com/2005/12/16/politics/bush-lets-us-spy-on-callers-without-courts.html.

Risso, Linda. “Harvesting Your Soul? Cambridge Analytica and Brexit.” Brexit Means Brexit?, 6 Dec. 2017, pp. 75–87. https://www.adwmainz.de/fileadmin/user_upload/Brexit-Symposium_Online-Version.pdf#page=75

Romm, Tony. “Amazon, Facebook, Other Tech Giants Spent Roughly $65 Million to Lobby Washington Last Year.” The Washington Post, WP Company, 22 Jan. 2021, www.washingtonpost.com/technology/2021/01/22/amazon-facebook-google-lobbying-2020/.

Sheppard, Kate, and Ryan Grim. “Snowden Docs: U.S. Spied on Negotiators at 2009 Climate Summit.” HuffPost, HuffPost, 30 Jan. 2014, www.huffpost.com/entry/snowden-nsa-surveillance_n_4681362.

Singer, Natasha. “How Google Took over the Classroom.” The New York Times, The New York Times, 13 May 2017, www.nytimes.com/2017/05/13/technology/google-education-chromebooks-schools.html.

Szoldra, Paul. “This Is Everything Edward Snowden Revealed in One Year of Unprecedented Top-Secret Leaks.” Business Insider, Business Insider, 16 Sept. 2016, www.businessinsider.com/snowden-leaks-timeline-2016-9.

“Terms Of Use.” Instagram, Instagram, https://help.instagram.com/581066165581870.

“The Value of Getting Personalization Right--or Wrong--Is Multiplying.” McKinsey & Company, McKinsey & Company, 7 Dec. 2021, www.mckinsey.com/capabilities/growth-marketing-and-sales/our-insights/the-value-of-getting-personalization-right-or-wrong-is-multiplying.

Walker, Lauren. “Using Windows 10? Microsoft Is Watching.” Newsweek, Newsweek, 11 Apr. 2016, www.newsweek.com/windows-10-recording-users-every-move-358952.

“What Is Privacy?” What Is Privacy, IAPP, https://iapp.org/about/what-is-privacy/.

Wojcicki, Susan. “Making Ads More Interesting.” Official Google Blog, 11 Mar. 2009, https://googleblog.blogspot.com/2009/03/making-ads-more-interesting.html.

Youyou, Wu, et al. “Computer-Based Personality Judgments Are More Accurate than Those Made by Humans.” Proceedings of the National Academy of Sciences, vol. 112, no. 4, 2015, pp. 1036–1040, doi:10.1073/pnas.1418680112.

Zuboff, Shoshana. The Age of Surveillance Capitalism: The Fight for a Human Future at the New Frontier of Power. Public Affairs, 2020.

Not Cited, Further Reading

Albakjaji, Mohamad, and Manal Kasabi. “The Right to Privacy from Legal and Ethical Perspectives.” Journal of Legal, Ethical and Regulatory Issues, Allied Business Academies, 18 Oct. 2021, www.abacademies.org/articles/the-right-to-privacy-from-legal-and-ethical-perspectives-12942.html.

Another essay that helped spark my research into the ethical importance of privacy.

Macnish, Kevin. “Surveillance Ethics.” Internet Encyclopedia of Philosophy, https://iep.utm.edu/surv-eth/.

An in-depth analysis of the ethical implications of surveillance on a historical and philosophical basis. Was an amazing resource for my personal understanding of the importance of privacy on a political level.

Invisible Chains

Dwoskin, Elizabeth. “Lending Startups Look at Borrowers' Phone Usage to Assess Creditworthiness.” The Wall Street Journal, Dow Jones & Company, 1 Dec. 2015, www.wsj.com/articles/lending-startups-look-at-borrowers-phone-usage-to-assess-creditworthiness-1448933308.

Startups developing surveillance software that analyzes users' behavior to evaluate their creditworthiness, based on extremely personal observations such as the number of texts a person sends.

Ertemel, Adnan Veysel, and Gökhan Aydın. “Technology Addiction in the Digital Economy and Suggested Solutions.” ADDICTA: The Turkish Journal on Addictions, 2018, doi:10.15805/addicta.2018.5.4.0038.

General analysis of technology addiction with suggestions on how to curb it at various levels.

Keenan, Spencer J. “Behind Social Media: A World of Manipulation and Control.” Scholar Commons, 2020, scholarcommons.scu.edu/engl_176/48/.

A significantly more in-depth analysis of social media's “manipulation” aspect. An excellent starting point for my research for “Invisible Chains”.

Qin, Yao, et al. “The Addiction Behavior of Short-Form Video App TikTok: The Information Quality and System Quality Perspective.” Frontiers in Psychology, vol. 13, 2022, doi:10.3389/fpsyg.2022.932805.

Research into the addictive properties of TikTok.

Misc

“What ‘The Social Dilemma’ Gets Wrong.” About Facebook, Facebook, https://about.fb.com/wp-content/uploads/2020/10/What-The-Social-Dilemma-Gets-Wrong.pdf.

Facebook's response to the Netflix documentary “The Social Dilemma,” with the comical opening statement that the documentary “Gives a distorted view of how social media platforms work to create a convenient scapegoat for what are difficult and complex societal problems.”

Serves as a generally interesting piece of damage control by the company.